Compact Inertial Measurement Unit

This project examines the use of inertial measurement units for gesture sensing and user feedback applications. The overall goal is to create a class of devices that have some sense of their own movement and can give feedback to a user when certain patterns occur. Imagine shoes that beep when you are overrotating, golf clubs that yell "Slice!" when you are about to slice, or even juggling balls that can teach you how to juggle.

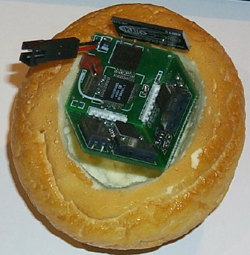

To reach this goal, we require not only sensors but also a framework for interpreting their data and simple, effective feedback channels. Accordingly, a simple scripting package has been created to recognize combinations of simple "atomic" gesture components in data from body-mounted sensors. Much as phonemes combine to form words, the user specifies a script of sequential microgestures that together form a macroscopic gesture. When the sensor data closely matches a specified script, the gesture is detected. The algorithm is efficient enough to run in real time on a low-end PDA, for example. A pair of small, wireless, handheld, 6-axis inertial measurement units (as shown here) was constructed to capture gestures and test this framework.
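The idea of matching a script of sequential microgestures can be illustrated with a minimal Python sketch. This is not the project's scripting package: the `Atom` record, the axis and kind labels, the `match_script` function, and the `max_gap` timing tolerance are all hypothetical names and assumptions chosen for illustration. The sketch assumes that atomic gestures have already been extracted from the sensor data and are sorted by start time.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Atom:
    """One detected atomic gesture (hypothetical representation)."""
    axis: str       # illustrative label, e.g. "accel_x" or "gyro_z"
    kind: str       # illustrative label, e.g. "line" or "twist"
    t_start: float  # seconds
    t_end: float    # seconds


Step = Tuple[str, str]  # (axis, kind) expected at one position in a script


def match_script(atoms: List[Atom], script: List[Step],
                 max_gap: float = 0.5) -> Optional[Tuple[float, float]]:
    """Return (start, end) of the first in-order match of `script`, else None.

    Assumes `atoms` is sorted by start time. Atoms that do not fit the next
    script step are skipped; a candidate match is abandoned once an atom
    starts more than `max_gap` seconds after the last matched atom ended.
    """
    if not script:
        return None
    for i, first in enumerate(atoms):
        if (first.axis, first.kind) != script[0]:
            continue
        start, last_end, step = first.t_start, first.t_end, 1
        for atom in atoms[i + 1:]:
            if step == len(script):
                break
            if atom.t_start - last_end > max_gap:
                break  # too long a pause: this candidate fails
            if (atom.axis, atom.kind) == script[step]:
                last_end = atom.t_end
                step += 1
        if step == len(script):
            return (start, last_end)
    return None


# Hypothetical macroscopic gesture: a push, a twist, then another push.
script = [("accel_x", "line"), ("gyro_z", "twist"), ("accel_x", "line")]
atoms = [
    Atom("accel_x", "line", 0.0, 0.2),
    Atom("gyro_z", "twist", 0.3, 0.5),
    Atom("accel_x", "line", 0.6, 0.8),
]
result = match_script(atoms, script)
```

Here a script is simply an ordered list of expected atoms, which mirrors the phoneme-to-word analogy: the macroscopic gesture is recognized only when its components appear in the right order and close enough together in time.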

Note that the hardware was first developed as the input controller for the Void* installation by the Synthetic Characters Group (which perhaps explains why it is housed in a bread bun). Here's a photo of the Void* installation in action, and here's a short video clip (QuickTime, 832K).

Publications and Presentations about this project:

An Inertial Measurement Framework for Gesture Recognition and Applications, Ari Y. Benbasat and Joseph A. Paradiso. In Ipke Wachsmuth, Timo Sowa (Eds.), Gesture and Sign Language in Human-Computer Interaction, International Gesture Workshop, GW 2001, London, UK, 2001 Proceedings, Springer-Verlag, Berlin, 2002, pp. 9-20.

An Inertial Measurement Unit for User Interfaces, Ari Y. Benbasat, MS Thesis, MIT Media Lab, September 2000.

Institute of Navigation Talk by Ari Benbasat - 01/2001 (PowerPoint)

Short Talk on Ari Benbasat's S.M. Work (PowerPoint)
